Blueshift Design

We design creative concerts, imaginative installations, and event experiences.

Design

We create visually compelling experiences for live events.

Programming

Programming for concerts, corporate events, and everything in between.

Writing

Narrative and copy that connects with your audience.

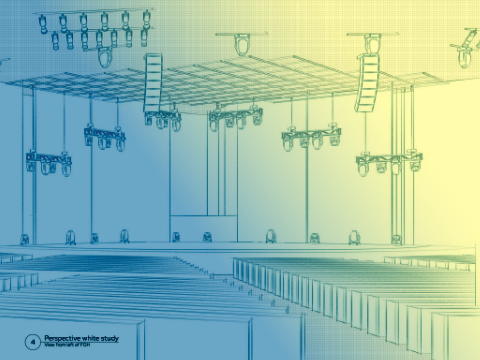

Show design

The separation between good and poor design, between excellence and mediocrity, lies in attention to detail. Our experience spans all major aspects of the production design industry: not only set and visual design, but also audio, video, and the details of emerging technologies. This gives us multi-faceted insight into every level of the production process, from the light on the performers, to how the stage will look from various angles and elevations, to the right placement of design elements to avoid conflicts with sound reinforcement.

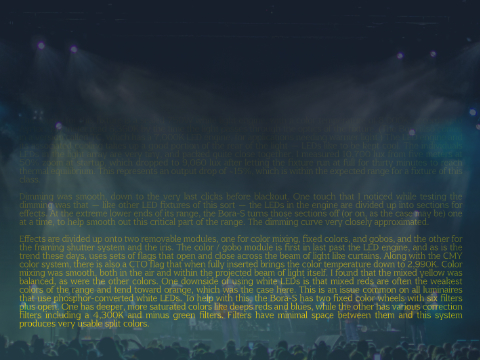

Programming

The difference between dynamism and listlessness, between lighting that engages and lighting that merely illuminates, is not whether a production has the newest, most expensive automated fixtures or the greatest number of moving lights; it is the skill and experience of the programmer. Tasteful and exciting lighting programming combines the disciplines of color theory, visual layering, contrast, brightness, and even the physiology of the human eye to make an impactful and memorable statement.

Writing and consulting

With a memorable voice and occasionally even a degree of wit, principal Craig Rutherford has written dozens of technical fixture reviews, write-ups of concerts and special events, and blog posts both here and as a guest writer. He has also appeared as a guest speaker at some of the biggest production trade shows.